Jonathan Preston, associate professor of communication sciences and disorders, is a co-investigator on two grants from the National Institutes of Health (NIH) that address childhood speech sound disorders. Preston is collaborating on the two projects with researchers from Montclair State University and New York University.

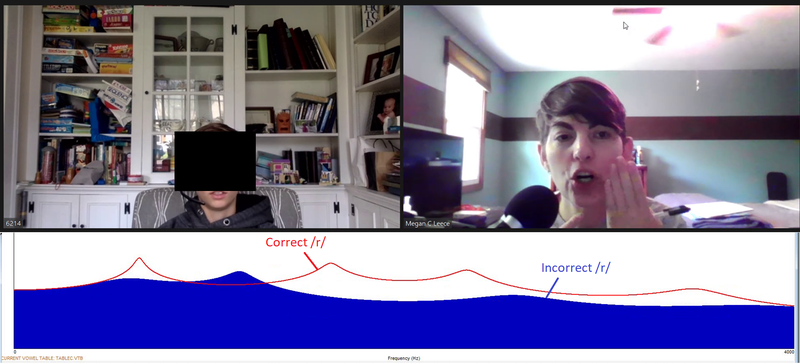

The first project, titled “Online Assessment and Enhancement of Auditory Perception for Speech Sound Errors,” will assess the effectiveness of visual-acoustic biofeedback as a speech therapy procedure when delivered through teletherapy to children ages 9 to 15 with persisting speech errors. Visual-acoustic biofeedback therapy uses software to convert sound into a real-time visual display. For example, when a child pronounces an /r/ sound, the display shows whether it matches the pattern of a clearly produced /r/ or that of a distorted one. Speech-language pathologists can then use this visual display to show children how moving their mouth changes the way they pronounce different sounds.

Speech-language pathologist Megan Leece, right, providing teletherapy to a client using a visual feedback graph.

As part of the project, speech-language pathologists at the Speech Production Lab at Syracuse University will conduct speech therapy remotely with children who have persisting speech sound disorders. The work is particularly timely, as the COVID-19 pandemic has increased the delivery of speech therapy services through telepractice.

“This is a specialized approach to treatment that, if effective via teletherapy, would increase access to effective treatment for children with speech disorders,” says Preston. “Families would not need to live near a specialist who uses this treatment but could remotely connect to a speech-language pathologist who is skilled at using visual-acoustic software in speech therapy.”

The team is also developing a computerized method to assess and treat speech perception (listening) skills in children with speech disorders, as there is evidence that poor perception is related to speech production difficulties.

In a second NIH-funded project, titled “Biofeedback-Enhanced Treatment for Speech Sound Disorder: Randomized Control Trial and Delineation of Sensorimotor Subtypes Supplement CSD,” Preston and his collaborators are comparing three speech therapy procedures for children with /r/ production difficulties.

At the Speech Production Lab, researchers will work with Syracuse-area children to test each of the three procedures: traditional motor-based therapy, which involves cueing children to change the position of their tongue to produce /r/; visual-acoustic biofeedback; and ultrasound biofeedback, which creates a real-time display of the tongue during speech and can help children see the shape and position of their tongue when producing a clear or a distorted /r/.

“We hope to determine whether children make faster progress in their speech production when given biofeedback treatments compared to traditional motor-based therapy,” says Preston. “We are also trying to determine if there are individual predictors of which children will respond best to each type of speech therapy.”

This research builds on Preston’s ongoing work to explore causes and treatments for speech sound disorders, with a focus on persisting articulation errors. Find out more at the Speech Production Lab website.